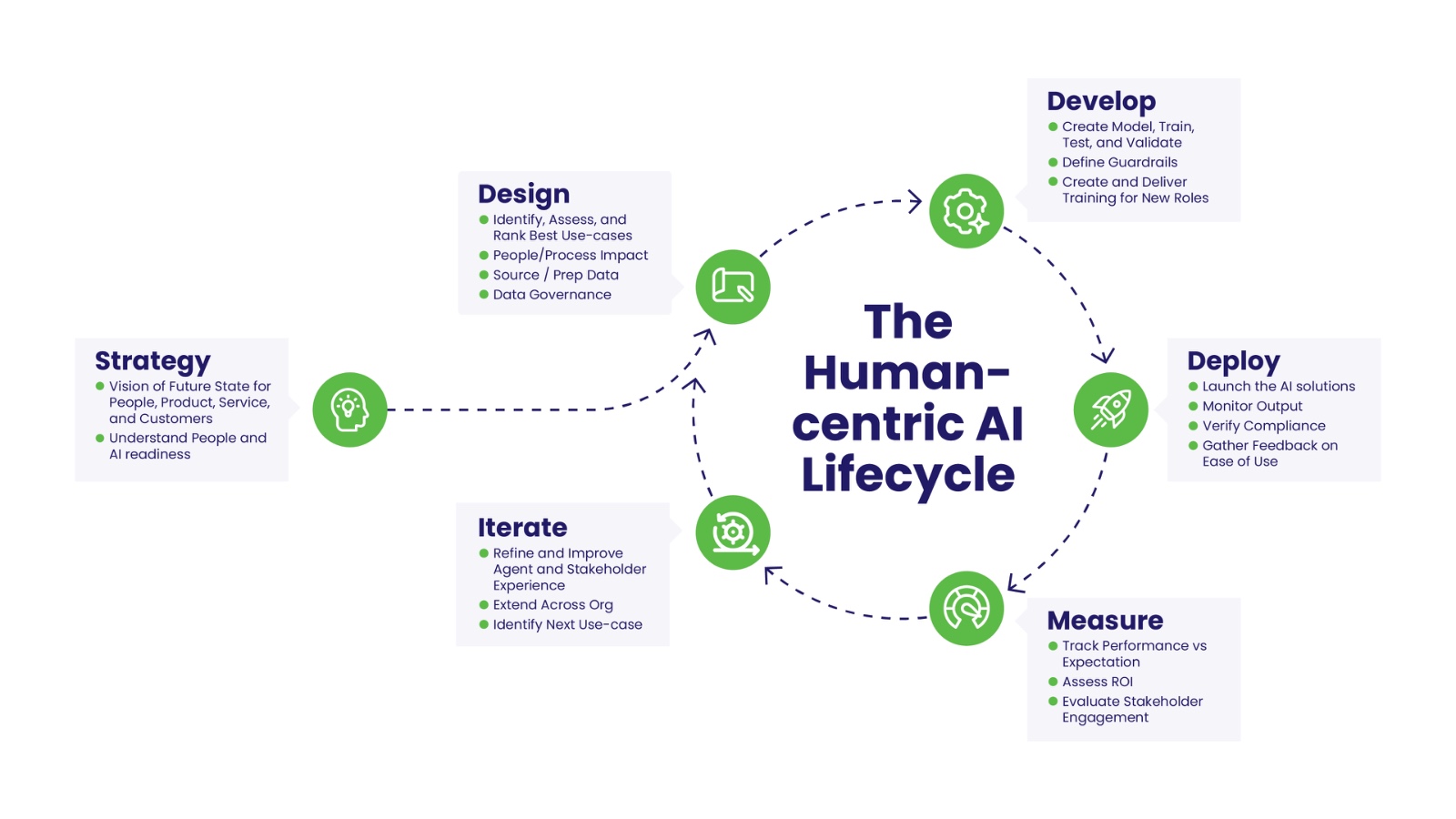

The Human-Centric AI Lifecycle

Building the leadership, culture, and capability to deliver real value.

Daymark Group is a leadership development and executive coaching firm that helps leaders and organizations navigate the human side of AI adoption. We address what technology alone cannot: the leadership readiness, cultural capacity, and human capability required to make AI adoption work. Our Human-Centric AI Lifecycle framework is the key to your success, and we approach each phase through a leadership and organizational development lens. We understand the technology landscape and bring in trusted partners as needed to address our clients’ needs.

The Human-Centric AI Lifecycle

Strategy: AI Doesn't Transform Organizations. Decisions Do.

That distinction is everything.

AI adoption means layering tools onto existing processes. AI strategy means building integrated intelligence that becomes inseparable from how your business competes. One creates busywork. The other creates advantage.

A real AI strategy answers three things:

- Why do we exist - and how does AI sharpen that purpose?

- Where will we compete - and which use cases actually move that needle?

- What will we not do - because focus is what separates scalers from experimenters?

What the Strategy phase requires:

- A clear vision for how AI aligns people, technology, data, and operations around shared outcomes

- AI and people readiness - understanding where your organization is capable of absorbing change

- Cross-functional orchestration - because AI that lives in one department stays a pilot

- A portfolio mindset - by 2030, leading organizations won't just use AI internally; they'll compete with it externally as a product in its own right

Design: Don't Automate the Work. Redesign It.

Before you build anything, audit everything.

Redesign roles, not just workflows. Roles fall into three categories:

- At risk - task execution that agents will take over

- Being transformed - execution shrinks, judgment expands, humans become system owners

- Emerging - new roles like agent operators, workflow architects, and AI quality auditors that are already forming inside teams, just not labeled yet

Governance isn't a checkbox. It's the foundation.

Develop: Build the Model. Build the People. Build the Guardrails.

Three things have to be built in parallel:

1. The model - trained, tested, and validated. Creating an AI agent that actually works requires clean data, defined parameters, and rigorous validation before anything goes near production. Guardrails aren't optional; they're what separates a controlled deployment from a liability.

2. The people - reskilled, not just informed. Map every role before you deploy. Which tasks will AI augment? Which will it automate? What new responsibilities emerge? If you can't answer those questions, you're not ready to go live. Don't just train for tool proficiency. Train people to ask: Why does this workflow exist? Should it still? The organizations with the highest AI adoption rates invested equally in people and technology.

3. The guardrails - at every level. Governance sets the rules. Processes and systems enforce them. Build oversight at four levels - governance, operating model, process, and system - because the organizations that only get levels one and two right are still flying blind where it matters most.

The leadership shift required: Move from command to context. Set expectations, model the behavior yourself, and cultivate the next generation of leaders who can navigate teams made up of both people and AI agents.

Deploy: Launch with Confidence, Not Just Speed

Runtime oversight is the difference between a successful launch and a costly one.

What that looks like in practice:

- Monitor outputs as they happen, not in a weekly review

- Verify compliance dynamically, with guardrails that enforce standards in real time

- Intervene fast when the system drifts from expected behavior

- Gather feedback from the people actually using it - ease of use signals adoption risk before it stalls
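For teams that want to see what runtime oversight could look like in code, here is a minimal sketch, not a production design. It checks every output as it happens, blocks anything that breaks a compliance rule, and escalates to a human when recent quality drifts below a baseline. The class name `RuntimeMonitor`, the banned terms, the quality score, and all thresholds are hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class RuntimeMonitor:
    # All values below are illustrative assumptions, not recommendations.
    banned_terms: tuple = ("ssn", "password")  # stand-in compliance rule
    baseline_score: float = 0.9                # expected quality level
    drift_threshold: float = 0.2               # tolerated drop from baseline
    window: int = 5                            # number of recent outputs to average
    scores: list = field(default_factory=list)

    def check_output(self, text: str, quality_score: float) -> str:
        """Return 'block', 'escalate', or 'allow' for a single output."""
        # Verify compliance on every output, in real time.
        if any(term in text.lower() for term in self.banned_terms):
            return "block"

        # Track quality and compare the recent average to the baseline.
        self.scores.append(quality_score)
        recent = self.scores[-self.window:]
        window_full = len(recent) == self.window
        if window_full and self.baseline_score - mean(recent) > self.drift_threshold:
            return "escalate"  # hand off to a human before the drift spreads

        return "allow"
```

In use, each agent response would pass through `check_output` before it reaches a customer or downstream system. How the quality score is produced (human rating, automated evaluation, or user feedback) is left open here and would depend on the use case.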

Measure: Prove the Value, Own the Outcome

Track what actually moves the business:

- People ROI - Are your employees more capable? Measure time-to-competency, internal re-skilling, and satisfaction, not just headcount reduction.

- Performance ROI - Decision velocity and outcome quality beat cost-per-transaction every time. AI users complete tasks faster and better. That's a competitive advantage story, not a cost story.

- Innovation ROI - How many ideas made it to market? Track concept-to-test speed and R&D cycle compression. Most boards never see this number. They should.

The measurement discipline that separates scalers from pilots:

The bottom line: AI ROI isn’t magic. It’s math. Define the problem, set the baseline, track relentlessly, and assign accountability before you spend the budget, not after.
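To make the math concrete, here is a small sketch under simple assumptions: one metric with a recorded baseline, and a single benefit and cost figure expressed in the same currency. The function names `percent_change` and `simple_roi` and the numbers in the usage lines are hypothetical.

```python
def percent_change(baseline: float, current: float) -> float:
    """Percent change of a metric relative to its pre-AI baseline."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100


def simple_roi(benefit: float, cost: float) -> float:
    """ROI as a fraction: net benefit divided by cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (benefit - cost) / cost


# Hypothetical example: time-to-competency drops from 40 days to 30,
# and a 100,000 investment returns 150,000 in measured benefit.
change = percent_change(baseline=40, current=30)
roi = simple_roi(benefit=150_000, cost=100_000)
```

The point of the sketch is the order of operations: the baseline has to exist before the spend, or there is nothing to compare against.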

Iterate: Scale What Works. Kill What Doesn't.

The pilot worked. Now what? This is where most AI programs stall. Not because the technology failed, but because the organization wasn’t built to carry it forward.

The proof-of-concept trap is real. A pilot runs fast with a small team, clean data, and no friction. Production is different. It demands infrastructure, systems integration, security reviews, compliance checks, and ongoing maintenance. The organizations that scale are the ones that plan for that gap, not the ones that discover it too late.

Iteration isn't a phase. It's a posture. That means:

- Refining the agent based on real-world performance data, not assumptions

- Improving stakeholder experience by closing the feedback loop with the people doing the work

- Extending across the org by applying what worked in one function to the next

- Identifying the next use case before the current one loses momentum

What separates scalers from pilot collectors: Leaders who openly acknowledge gaps, reinforce new behaviors through systems and incentives, and treat transformation as an ongoing journey, not a project with an end date.

Stay Relevant